Why Everyone Is Confusing Vibe Coding and Agentic Coding Right Now

Both terms exploded across developer Twitter, job postings, and AI tool documentation within the same twelve-month window. That timing is the core problem.

When two new concepts land simultaneously and both involve AI writing code, readers naturally collapse them into one idea. They are not the same idea.

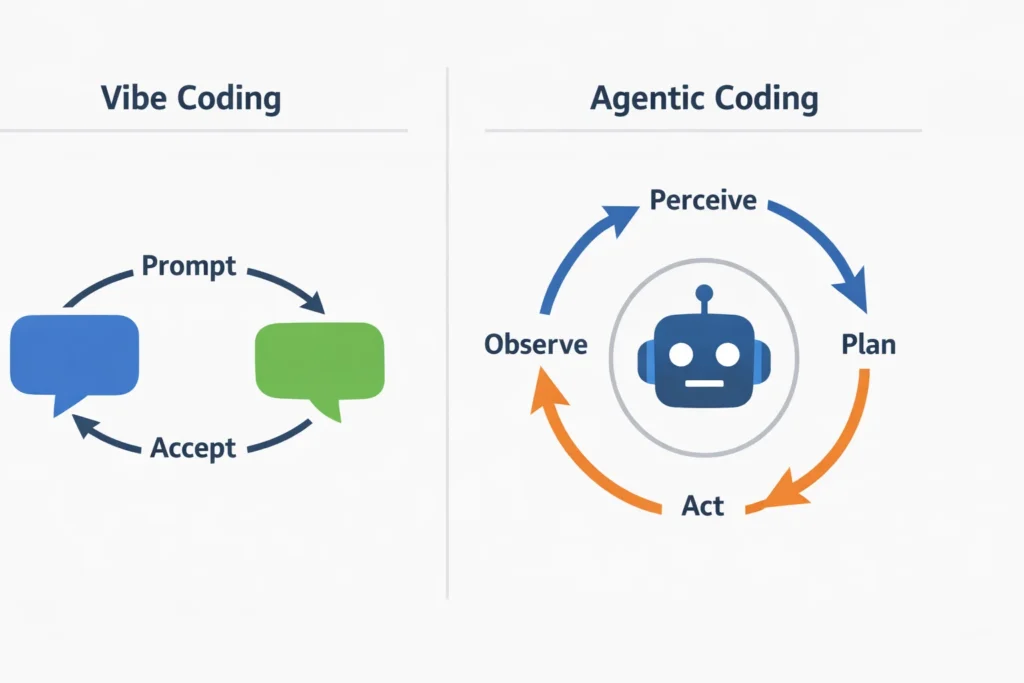

Andrej Karpathy introduced “vibe coding” in early 2025 to describe a specific, casual relationship with AI-generated code — one where the human stops reading the output and just rides the wave. Agentic coding describes something structurally different: an AI system that plans, executes, and self-corrects across multiple steps without waiting for a human to approve each one. The confusion is understandable. The distinction matters.

What Is Vibe Coding? (And What It Actually Means in Practice)

Vibe coding is when you use AI to generate code without reading or deeply understanding it, prioritizing speed and output over comprehension or control.

Karpathy described it plainly: you forget that the code even exists. You prompt, the model generates, you accept, you move forward. If something breaks, you paste the error back into the chat and accept the fix. You never audit the logic. You never own the implementation.

That description is not a criticism. It is a workflow. And for a specific class of user and task, it works.

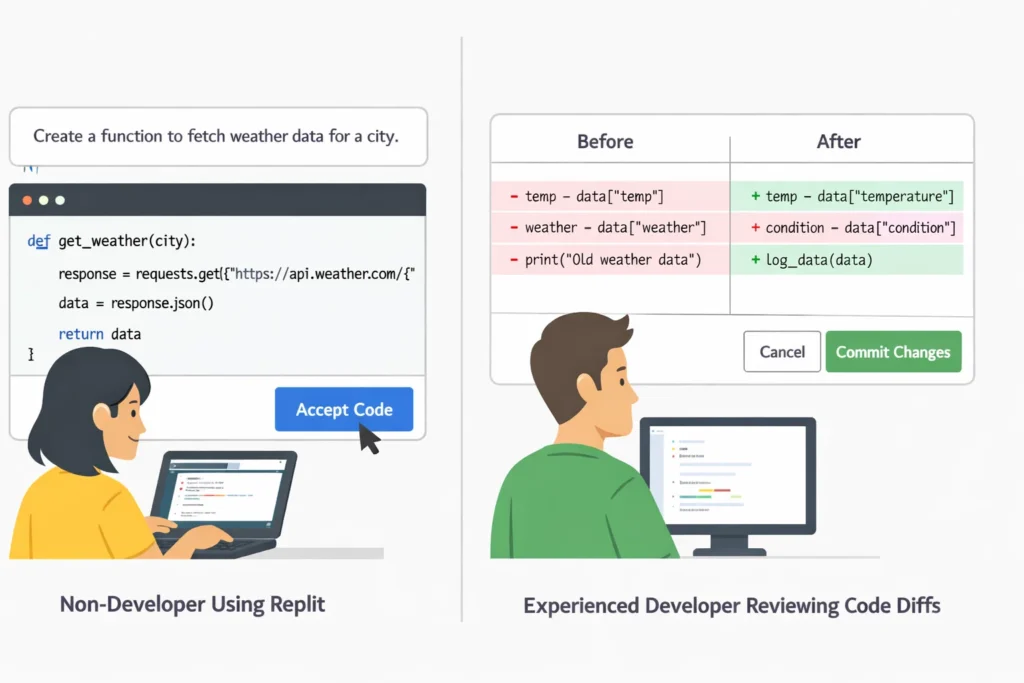

Imagine someone with no computer science background who wants to build a personal portfolio site. They open Cursor or Replit AI, describe what they want, and accept whatever comes out. They iterate by describing symptoms, not by reading stack traces. The portfolio gets built. They never understood a line of the code. That is vibe coding in its clearest form.

The workflow looks like this: write a natural-language prompt, accept the generated code, test it by using it, describe what went wrong, accept the fix, repeat.
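The loop above can be sketched in a few lines. This is only an illustration: `generate` and `try_it` are hypothetical stand-ins for "ask the model" and "use the result and see what happens", not any real tool's API. Note that the human triggers every round and never inspects `code`.

```python
from typing import Callable, Optional

def vibe_code(prompt: str,
              generate: Callable[[str], str],
              try_it: Callable[[str], Optional[str]],
              max_rounds: int = 5) -> str:
    """Prompt, accept, try it, describe the symptom, accept the fix, repeat."""
    code = generate(prompt)                  # accept the first output unread
    for _ in range(max_rounds):
        symptom = try_it(code)               # "test" by using the result
        if symptom is None:                  # nothing visibly wrong: move on
            return code
        # Describe what went wrong and accept whatever comes back.
        code = generate(f"{prompt}\nIt does this instead: {symptom}")
    return code

# Toy stand-ins so the sketch runs without a model.
def fake_model(prompt: str) -> str:
    return "v2" if "instead" in prompt else "v1"

def fake_try(code: str) -> Optional[str]:
    return None if code == "v2" else "blank page"

result = vibe_code("build a portfolio site", fake_model, fake_try)
```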

Where vibe coding breaks down is predictable. When output quality matters — when security vulnerabilities, performance regressions, or architectural decisions are on the table — accepting code without reading it becomes a liability. Production systems built entirely through vibe coding tend to accumulate invisible debt fast.

The user this approach suits best: a non-technical builder who needs a working prototype quickly and is not deploying to a context where code quality is critical.

What Is Agentic Coding? (It’s Not Just AI Writing Code for You)

Agentic coding is when an AI system autonomously plans, writes, executes, and corrects code across multiple steps, with the developer overseeing outcomes rather than directing each action.

The word “agentic” is doing real work here. It refers to the agent loop: the system perceives a task, makes a plan, takes an action, observes the result, and adjusts. That loop runs repeatedly, without a human prompt triggering each cycle.
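As a rough sketch of that loop, assuming hypothetical `plan` and `act` callables in place of a real model and real tools, the control flow looks something like this. The point is that nothing inside the `for` loop waits for a human prompt.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional

@dataclass
class AgentState:
    goal: str
    history: List[str] = field(default_factory=list)  # observations so far
    done: bool = False

def run_agent(state: AgentState,
              plan: Callable[[AgentState], Optional[str]],
              act: Callable[[str], str],
              max_steps: int = 20) -> AgentState:
    for _ in range(max_steps):
        action = plan(state)               # perceive the state, plan the next action
        if action is None:                 # planner judges the goal met
            state.done = True
            break
        observation = act(action)          # act: write a file, run tests, call a tool
        state.history.append(f"{action} -> {observation}")  # observe, then loop
    return state

# Toy planner and executor so the sketch runs on its own.
steps = ["read codebase", "write auth module", "run tests"]

def toy_plan(state: AgentState) -> Optional[str]:
    i = len(state.history)
    return steps[i] if i < len(steps) else None

def toy_act(action: str) -> str:
    return "ok"

final = run_agent(AgentState(goal="add auth"), toy_plan, toy_act)
```

The `max_steps` cap is a design choice in this sketch: it is the simplest way to guarantee the loop hands control back to a human eventually.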

In practice, this means the AI reads your codebase, decomposes the task into sub-tasks, writes files, runs tests, catches failures, debugs them, and reports back when done — or when it is stuck. The developer sets the goal and reviews the outcome. They do not write the prompts for each step.

This is mechanically different from vibe coding in one critical way: the AI is making decisions, not just executing instructions.

Tools built for agentic coding include Claude Code (Anthropic’s CLI agent, released in 2025), Devin from Cognition AI, and Google Jules. These systems use tool-calling to interact with file systems, terminals, browsers, and external APIs. Many now support MCP — the Model Context Protocol — which standardizes how agents connect to and use external tools. According to Anthropic’s documentation on Claude Code, the system operates directly in your terminal, understands your project context, and executes multi-step coding tasks end-to-end.
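Tool-calling is easier to picture with a toy dispatcher. The sketch below is an assumption-heavy illustration, not how any of the named products is implemented: the model is imagined to emit a JSON object naming a tool and its arguments, and the harness looks the tool up in a registry and runs it. The tool names and the in-memory `FAKE_FS` are hypothetical.

```python
import json
from typing import Callable, Dict

TOOLS: Dict[str, Callable[..., str]] = {}
FAKE_FS: Dict[str, str] = {"app.py": "print('hello')"}  # stands in for a real file system

def tool(fn: Callable[..., str]) -> Callable[..., str]:
    """Register a function so the agent can call it by name."""
    TOOLS[fn.__name__] = fn
    return fn

@tool
def read_file(path: str) -> str:
    return FAKE_FS.get(path, f"error: {path} not found")

@tool
def write_file(path: str, content: str) -> str:
    FAKE_FS[path] = content
    return f"wrote {len(content)} chars to {path}"

def dispatch(call_json: str) -> str:
    """Execute one model-issued tool call and return the observation as text."""
    call = json.loads(call_json)
    fn = TOOLS.get(call["name"])
    if fn is None:
        return f"error: unknown tool {call['name']}"
    return fn(**call["arguments"])

out = dispatch('{"name": "write_file", "arguments": {"path": "auth.py", "content": "# TODO"}}')
```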

Performance benchmarks like SWE-bench measure how well agentic systems resolve real GitHub issues, giving developers a data-grounded way to compare agent capabilities rather than relying on marketing claims.
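The headline number on this kind of benchmark is a resolve rate: the share of tasks where the agent's patch makes the task's tests pass. A minimal sketch of that arithmetic, using made-up task IDs and outcomes rather than real benchmark data:

```python
from typing import Dict

def resolve_rate(outcomes: Dict[str, bool]) -> float:
    """Fraction of tasks whose tests pass after the agent's patch is applied."""
    if not outcomes:
        raise ValueError("no tasks were evaluated")
    return sum(outcomes.values()) / len(outcomes)

# Hypothetical outcomes for two agents on the same four invented tasks.
agent_a = {"task-1": True, "task-2": False, "task-3": True, "task-4": True}
agent_b = {"task-1": True, "task-2": False, "task-3": False, "task-4": True}

rate_a = resolve_rate(agent_a)
rate_b = resolve_rate(agent_b)
```

Comparing agents this way is only meaningful when both were run on the same task set.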

Where agentic coding breaks down: when the task scope is poorly defined, when the agent lacks sufficient context about the codebase, or when error recovery goes circular. Agents can confidently walk in the wrong direction for many steps before a human intervenes.
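One way to contain circular error recovery is a guard that escalates to a human when the same error keeps coming back or a step budget runs out. The sketch below is one possible heuristic under assumed thresholds, not a feature of any specific tool. It takes the error message produced at each step, with an empty string meaning the step succeeded.

```python
from collections import Counter
from typing import Iterable, Optional

def first_stall(errors: Iterable[str],
                max_repeats: int = 3,
                max_steps: int = 25) -> Optional[str]:
    """Return a reason to hand control back to a human, or None if the run looks healthy."""
    seen: Counter = Counter()
    for step, err in enumerate(errors, start=1):
        if step > max_steps:
            return f"step budget of {max_steps} exhausted"
        if err:
            seen[err] += 1
            if seen[err] >= max_repeats:
                return f"same error seen {seen[err]} times: {err}"
    return None

run = ["ImportError: jwt", "", "ImportError: jwt", "TypeError: x", "ImportError: jwt"]
reason = first_stall(run)
```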

Vibe Coding vs Agentic Coding: The Core Difference Explained Simply

One sentence that captures it: vibe coding is human-directed and AI-executed, while agentic coding is AI-directed and AI-executed with human oversight at the boundaries.

The distinction lives in who is making decisions mid-task. In vibe coding, the human remains the decision-maker; they just outsource the implementation and stop auditing it. In agentic coding, the AI makes the intermediate decisions: which pieces to build, in what order, and how to fix errors. The human sets the objective and reviews the final output.

| | Vibe Coding | Agentic Coding |
|---|---|---|
| Control Level | Human-directed | AI-directed with human oversight |
| Best For | Prototyping, non-critical builds, non-developers | Complex multi-file tasks, production workflows, experienced developers |
| Typical Tools | Cursor, Replit AI, GitHub Copilot | Claude Code, Devin, Google Jules |
| Task Type | Single-session, conversational | Multi-step, autonomous execution |
| Main Risk | Unreviewed code, invisible technical debt | Scope drift, compounding errors without intervention |
| User Type | Non-technical builders, rapid prototypers | Solo developers, engineering teams |

The autonomy spectrum makes this intuitive. On one end: a human writes all the code. Moving right: AI assists with suggestions (Copilot). Further right: vibe coding, where the human prompts and accepts but stays in the loop at each turn. Further still: agentic coding, where the AI runs a full loop across many steps. At the far end: fully autonomous systems that operate without human checkpoints at all. Neither vibe nor agentic coding sits at that far extreme — but they occupy very different positions on that spectrum.

A plain-language summary for quick reference: vibe coding is when you use AI to generate code without reading or deeply understanding it — prioritizing speed over control. Agentic coding is when an AI system autonomously plans, writes, executes, and corrects code across multiple steps, with the developer overseeing outcomes rather than writing prompts for each action.

What Does Each Approach Look Like in a Real Workflow?

Take a concrete task: add user authentication to a web app.

Via vibe coding:

You open your AI coding tool of choice and type: “Add email and password authentication to my Express app. Use JWT tokens. Store users in a Postgres database.”

The model generates a block of code. You paste it in. Something breaks. You paste the error message back. It generates a fix. You accept it. You test by actually trying to log in. It works. You move on.

You now have authentication. You did not read the token expiry logic. You did not check the password hashing algorithm. You do not know if refresh tokens are implemented. If you are building a personal project, that might be acceptable. If this is handling real user credentials, it is not.
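To make those unread details concrete, here is roughly what a reviewer would look for. This is a standard-library Python sketch, not the Express code from the scenario. A real project would use maintained password-hashing and JWT libraries rather than hand-rolled helpers. The iteration count and the 15-minute lifetime are assumptions chosen for illustration.

```python
import base64, hashlib, hmac, json, os
from typing import Optional, Tuple

def hash_password(password: str, salt: Optional[bytes] = None,
                  iterations: int = 600_000) -> Tuple[bytes, bytes]:
    """Salted, deliberately slow hash (PBKDF2-HMAC-SHA256), never a bare SHA or MD5."""
    salt = salt or os.urandom(16)
    return salt, hashlib.pbkdf2_hmac("sha256", password.encode(), salt, iterations)

def verify_password(password: str, salt: bytes, expected: bytes) -> bool:
    _, digest = hash_password(password, salt)
    return hmac.compare_digest(digest, expected)      # constant-time comparison

def _b64url(data: bytes) -> str:
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode()

def _sign(signing_input: str, secret: bytes) -> str:
    return _b64url(hmac.new(secret, signing_input.encode(), hashlib.sha256).digest())

def sign_token(claims: dict, secret: bytes, ttl_seconds: int, now: float) -> str:
    """HS256-style token that always carries an expiry claim."""
    header = _b64url(json.dumps({"alg": "HS256", "typ": "JWT"}).encode())
    payload = _b64url(json.dumps({**claims, "exp": int(now) + ttl_seconds}).encode())
    return f"{header}.{payload}.{_sign(f'{header}.{payload}', secret)}"

def verify_token(token: str, secret: bytes, now: float) -> Optional[dict]:
    """Return the claims only if the signature matches and the token has not expired."""
    try:
        header, payload, sig = token.split(".")
    except ValueError:
        return None
    if not hmac.compare_digest(sig, _sign(f"{header}.{payload}", secret)):
        return None
    claims = json.loads(base64.urlsafe_b64decode(payload + "=" * (-len(payload) % 4)))
    return claims if claims["exp"] > now else None

token = sign_token({"sub": "user-1"}, b"demo-secret", ttl_seconds=900, now=1000)
```

If the generated code has no equivalent of the `exp` check or uses a fast, unsalted hash, that is exactly the kind of gap the vibe-coded version would never surface.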

Via agentic coding:

You open Claude Code in your terminal or a tool like Devin and describe the goal: “Implement JWT-based email authentication with secure password storage. Write tests. Do not break existing routes.”

The agent reads your existing codebase. It identifies your current route structure, your database schema, and your existing middleware. It creates a plan — new model files, updated route handlers, migration scripts, test files. It executes each step, runs your test suite after each change, catches the failure when a route conflict appears, and resolves it. It reports back with a summary of what it changed and which tests pass.
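The "execute a step, run the tests, repair on failure" rhythm described above can be sketched as follows. The callables and step names are hypothetical placeholders for the agent's real actions. The repair limit is an assumed safeguard so the agent reports back as stuck instead of looping.

```python
from typing import Callable, List, Tuple

def execute_plan(steps: List[str],
                 apply: Callable[[str], None],
                 run_tests: Callable[[], bool],
                 repair: Callable[[str], None],
                 max_repairs: int = 2) -> List[Tuple[str, str]]:
    """Apply each step, run the test suite after it, and repair before moving on."""
    report: List[Tuple[str, str]] = []
    for step in steps:
        apply(step)
        attempts = 0
        while not run_tests():
            if attempts == max_repairs:        # give up and report back to the human
                report.append((step, "stuck"))
                return report
            repair(step)
            attempts += 1
        status = "passed" if attempts == 0 else f"passed after {attempts} repair(s)"
        report.append((step, status))
    return report

# Toy environment: the second step introduces a route conflict that one repair fixes.
broken = set()

def toy_apply(step: str) -> None:
    if step == "update route handlers":
        broken.add("route conflict")

def toy_tests() -> bool:
    return not broken

def toy_repair(step: str) -> None:
    broken.clear()

plan = ["add user model", "update route handlers", "add migration", "add tests"]
report = execute_plan(plan, toy_apply, toy_tests, toy_repair)
```

The returned `report` plays the role of the summary the agent hands back alongside the diff.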

You review a diff. You did not write a single prompt after the first one.

The same task. Fundamentally different experience of control, transparency, and cognitive involvement.

Which One Should You Use? A Simple Decision Framework

The right answer depends on three variables: your technical skill level, your task complexity, and how much you need to own and understand the output.

Three profiles cover most real users:

The Non-Technical Builder. You are building something for your own use or for a small audience. Speed matters more than code quality. You will not maintain this codebase long-term and you do not need to audit what the AI writes. Vibe coding fits you well. Use Cursor, Replit AI, or a chat-based interface like Claude.ai. Accept that you are trading understanding for speed, and keep the stakes of the project low enough that this trade makes sense.

The Solo Developer. You know how to read code and you care about what gets committed to your repository. You want AI to accelerate your work, not replace your judgment. For quick, single-file tasks or exploratory work, the prompt-and-accept loop of vibe coding serves you well, provided you actually review what you accept. For larger refactors, cross-file changes, or tasks involving test generation and error handling, agentic tools like Claude Code give you speed without asking you to stop thinking. You stay in the loop at the objective level while the agent handles execution.

The Engineering Team Lead. You need AI that fits into an existing workflow, respects codebase conventions, integrates with CI/CD, and produces reviewable diffs. Agentic coding tools built for professional development contexts are the right tier. Vibe coding at the team level, with code going unreviewed into shared repositories, introduces risk that compounds quickly. Define your team’s human-in-the-loop checkpoints clearly before deploying any agentic tooling in a shared codebase.
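Human-in-the-loop checkpoints can be written down as an explicit policy rather than left as a habit. A minimal sketch, in which the action names are invented for illustration and would in practice come from a team's own tooling:

```python
from typing import Optional

# Hypothetical action names; each team would define its own list.
REQUIRES_APPROVAL = {"merge_to_main", "run_migration", "edit_ci_config", "delete_files"}

def may_proceed(action: str, approved_by: Optional[str] = None) -> bool:
    """Low-risk actions run freely; gated actions need a named human approver."""
    if action in REQUIRES_APPROVAL:
        return bool(approved_by)
    return True
```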

A direct rule of thumb: if output quality has real consequences and you are the person accountable for the code, you need to understand what was generated. That points toward agentic tooling, where review is built into the workflow, rather than vibe coding, where review is optional and usually skipped.
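The three variables and three profiles can be compressed into a small decision function. This is one reading of the framework, not a definitive rule. The field names are hypothetical, and the advice for a non-technical builder who is nonetheless accountable for the result is an assumption drawn from the warnings earlier in the article.

```python
from dataclasses import dataclass

@dataclass
class Situation:
    reads_code: bool     # technical skill: can you review a diff?
    multi_file: bool     # task complexity: does the work span many files or steps?
    accountable: bool    # ownership: are you answerable for the output?

def recommend(s: Situation) -> str:
    if not s.reads_code:
        # Assumption: without review skills, lower the stakes rather than switch tools.
        return "vibe coding, low stakes only" if s.accountable else "vibe coding"
    if s.multi_file or s.accountable:
        return "agentic coding with review"
    return "vibe coding with active review"

builder = recommend(Situation(reads_code=False, multi_file=False, accountable=False))
solo_quick = recommend(Situation(reads_code=True, multi_file=False, accountable=False))
team_lead = recommend(Situation(reads_code=True, multi_file=True, accountable=True))
```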

Which Tools Are Built for Vibe Coding and Which Are Built for Agentic Coding?

The tools are not interchangeable, and most were designed with one approach in mind.

Vibe coding tools optimize for conversational, prompt-and-accept interaction. GitHub Copilot remains the most widely used, offering inline suggestions and chat-based generation inside your editor. Cursor follows the same interaction pattern with a stronger emphasis on context-aware code editing, and it is popular among developers who vibe code but still want to see the output. Replit AI targets non-developers directly, wrapping AI generation inside a browser-based environment with no local setup required.

Agentic coding tools are built for multi-step autonomous execution. Claude Code, released by Anthropic in 2025, operates as a CLI agent that reads your local files, runs terminal commands, and executes end-to-end coding tasks with awareness of the surrounding codebase; Anthropic documents it on its Claude Code page. Devin from Cognition AI was among the first systems marketed explicitly as an autonomous software engineer, capable of browsing documentation, writing and running code, and filing pull requests. Google Jules, first previewed in late 2024 and opened as a public beta in 2025, targets asynchronous coding tasks and integrates directly with GitHub repositories.

MCP — the Model Context Protocol — is worth understanding separately. It is the emerging standard for how agentic systems connect to external tools, databases, and APIs. Agents that support MCP can interact with your file system, run browser sessions, query databases, and call external services within a single task loop. It makes agentic tools significantly more capable in real-world codebases.
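On the wire, MCP messages are JSON-RPC 2.0, and a tool invocation uses the `tools/call` method with the tool's name and arguments. The sketch below only builds such a request. The tool name `query_database` and its `sql` argument are hypothetical, and a real client would also handle initialization, transport, and responses.

```python
import json

def mcp_tool_call(request_id: int, tool: str, arguments: dict) -> str:
    """Serialize a JSON-RPC 2.0 request in the shape MCP uses for tool calls."""
    return json.dumps({
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool, "arguments": arguments},
    })

# Hypothetical tool exposed by some MCP server.
message = mcp_tool_call(1, "query_database", {"sql": "SELECT count(*) FROM users"})
```

Because every server speaks the same message shape, an agent can use a new tool without tool-specific glue code.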

A dedicated comparison of the best AI coding tools for developers in 2025, with current benchmark data, gives a more granular view than this section allows.

OpenAI’s o3 model also performs notably well on coding benchmarks and powers several coding-focused integrations, though it is more commonly accessed through API or ChatGPT than through a standalone coding agent.

Does This Change What It Means to Be a Developer in 2026?

Yes, but not in the direction most headlines suggest.

Agentic coding does not eliminate the need for developers. It shifts what developers spend their time doing. Writing boilerplate, generating tests, handling repetitive refactors, and translating specs into initial implementations — these tasks increasingly belong to agents. Defining objectives clearly, reviewing architectural decisions, catching failure modes before they compound, and understanding the codebase deeply enough to set agents on the right path — these remain human responsibilities.

The GitHub Octoverse 2024 report documented that AI tools are most impactful when developers already understand the domain. That finding holds here: agentic coding amplifies competence rather than substituting for it.

What actually changes is cognitive load distribution. Developers in 2026 who work well with agentic tools spend less time in implementation and more time in specification, review, and system design. That is a meaningful shift in what a workday looks like — but it is not obsolescence.

Vibe coding, by contrast, opens software creation to people who were previously locked out entirely. That expansion is real and has genuine value. It does not, however, produce the same quality of output as a skilled developer using agentic tools with strong review practices.

The practical implication: developers who understand the agent loop, can write precise task specifications, and can review agentic output efficiently will have a structural advantage in the tooling landscape of the next few years. The skill is not learning to prompt a chatbot. It is knowing enough to catch what the agent gets wrong.

FAQ

Is vibe coding the same as AI-assisted coding?

No. AI-assisted coding is a broad umbrella that covers any use of AI in a coding workflow, from autocomplete suggestions to full code generation. Vibe coding is a specific mindset within that umbrella: the deliberate choice to accept AI output without reading or deeply understanding it. All vibe coding is AI-assisted coding. Most AI-assisted coding is not vibe coding.

Can non-developers use agentic coding tools?

In limited ways, yes, but with significant friction. Agentic tools like Devin and Claude Code are designed for users who can define tasks precisely, interpret results, and recognize when an agent has gone in the wrong direction. Without a foundation in how software systems work, a non-developer will struggle to course-correct when an agent hits a wall, and at some point it will. Vibe coding tools remain the more accessible entry point for non-developers.

What is the agent loop?

The agent loop is the core mechanism that makes coding “agentic.” It runs in four stages: perceive (the agent reads the current state of the codebase and task), plan (it decides what action to take next), act (it executes that action, such as writing a file, running a command, or calling a tool), and observe (it reads the result and decides whether to continue, adjust, or report back). This loop repeats autonomously until the task is complete or the agent cannot proceed without human input.

Which tools are best for vibe coding?

Cursor is the current favorite among developers who vibe code but want a polished editor experience. Replit AI is the strongest option for non-developers who need a browser-based, zero-setup environment. GitHub Copilot remains the most widely integrated option for developers already inside VS Code or JetBrains IDEs. For pure conversational code generation without an IDE, Claude.ai and ChatGPT both handle vibe coding sessions well.

Will agentic coding replace developers?

The current evidence says no. SWE-bench results, which measure whether agentic systems can resolve real GitHub issues, show strong progress but not parity with experienced developers on complex, novel problems. Agents perform well on well-scoped tasks with clear success criteria. They struggle with ambiguous requirements, unfamiliar codebases without documentation, and tasks that require understanding organizational context. The more likely outcome is a redefinition of developer work, with less time spent on implementation and more on system design and agent oversight, rather than replacement.